SaaS Vendor Due Diligence: Making Security Questionnaires Useful Instead of Performative

Priya Nair · April 12, 2026

Security questionnaires have become a ritual that burns weeks on both sides. Learn how to tier vendors, reuse attestations, focus on residual risk, and build a due diligence rhythm that actually improves your SaaS governance posture.

Every enterprise security team knows the pattern: a business unit wants a promising SaaS tool, procurement accelerates the contract, and someone forwards a hundred-row spreadsheet labeled “vendor questionnaire” two weeks before signature. Vendors paste last year’s answers; your analysts re-key the same follow-ups; executives wonder why “security review” still blocks revenue. The process is not irrational, since regulated firms must evidence oversight, but it is often decoupled from actual risk. When questionnaires become box-checking theater, organizations miss the handful of integrations that truly warrant deep inspection while drowning in paperwork for low-impact utilities.

Start with tiering, not templates

Effective due diligence begins by classifying the application against data sensitivity, regulatory scope, and technical touchpoints. A marketing newsletter platform that stores no customer PII should not travel the same path as a customer support suite with full transcript history and SSO into your identity provider. Publish a short policy that maps tiers to minimum evidence: Tier 1 might require only a privacy policy review and a subprocessor list; Tier 3 might mandate architecture diagrams, pen test summaries, and custom API scoping workshops. When business sponsors understand the rules up front, they bring security in earlier instead of treating review as a surprise gate at the end.

Tiering also calibrates how much you trust attestations versus bespoke testing. A vendor with a current SOC 2 Type II and clear data flow documentation may satisfy most of your control questions without a custom questionnaire. Save deep dives for vendors that process regulated data, connect broadly through integrations, or train models on customer content. The goal is to concentrate expert time where marginal risk reduction is highest.
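As a rough illustration of the tiering policy described above, the sketch below maps a few vendor attributes to a tier and a minimum evidence list. The attribute names, the decision rules, and the Tier 2 evidence set are assumptions made for the example; only the Tier 1 and Tier 3 evidence items come from the text, and a real policy would weigh more factors.

```python
from dataclasses import dataclass

# Hypothetical minimum-evidence policy. Tier 1 and Tier 3 follow the examples
# in the article; the Tier 2 entry is an assumed middle ground.
MIN_EVIDENCE = {
    1: ["privacy policy review", "subprocessor list"],
    2: ["privacy policy review", "subprocessor list", "SOC 2 Type II report"],
    3: ["architecture diagrams", "pen test summary", "API scoping workshop"],
}


@dataclass(frozen=True)
class VendorProfile:
    # All field names are illustrative, not a standard schema.
    name: str
    stores_customer_pii: bool
    in_regulatory_scope: bool
    sso_or_directory_access: bool
    trains_models_on_customer_content: bool = False


def assign_tier(v: VendorProfile) -> int:
    """Return 1 (lightest review) to 3 (deepest review) under assumed rules."""
    # Regulated data or model training on customer content goes straight to Tier 3.
    if v.in_regulatory_scope or v.trains_models_on_customer_content:
        return 3
    # Sensitive data combined with identity integration is also treated as Tier 3.
    if v.stores_customer_pii and v.sso_or_directory_access:
        return 3
    # Either factor alone lands in the middle tier.
    if v.stores_customer_pii or v.sso_or_directory_access:
        return 2
    return 1


newsletter = VendorProfile("newsletter-tool", False, False, False)
support = VendorProfile("support-suite", True, False, True)

for vendor in (newsletter, support):
    tier = assign_tier(vendor)
    print(vendor.name, "-> Tier", tier, MIN_EVIDENCE[tier])
```

Keeping the rules in one small function makes the policy easy to publish and easy for business sponsors to predict before they open an intake request.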

Reuse intelligence across the portfolio

Most questionnaire answers do not change quarter to quarter. Maintain an internal library of vendor responses mapped to control frameworks—ISO clauses, NIST CSF functions, or your own control IDs—so analysts can diff new submissions against prior years. When a vendor updates subprocessors or data residency, you review deltas instead of rereading the entire file. For widely used platforms, participate in industry groups that publish standardized answers; pushing every buyer to invent unique wording wastes everyone’s time without improving outcomes.
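One way to review deltas rather than whole files is to key each answer by control ID and compare submissions. The following is a minimal sketch; the control IDs and answer text are invented for illustration, and a real library would likely normalize wording more carefully than a whitespace strip.

```python
def diff_responses(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two questionnaire submissions keyed by control ID."""
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    # An answer counts as changed if its text differs after trimming whitespace.
    changed = sorted(
        key
        for key in previous.keys() & current.keys()
        if previous[key].strip() != current[key].strip()
    )
    return {"added": added, "removed": removed, "changed": changed}


# Hypothetical control IDs and answers.
last_year = {
    "ENC-01": "AES-256 at rest, keys rotated annually",
    "RES-02": "Data stored in EU region only",
    "SUB-03": "Two subprocessors, listed in trust portal",
}
this_year = {
    "ENC-01": "AES-256 at rest, keys rotated annually",
    "RES-02": "Data stored in EU and US regions",
    "AI-04": "Customer content excluded from model training",
}

delta = diff_responses(last_year, this_year)
print(delta)
```

Here the analyst would read only the changed residency answer, the new AI item, and the missing subprocessor answer instead of the full file.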

Pair document reuse with continuous monitoring signals. Annual questionnaires miss incidents that happen in week six. Subscribe to vendor trust portals, breach notifications, and configuration drift alerts where available. Discovery platforms that inventory OAuth grants and API scopes can flag when a “low tier” app suddenly gains broad directory access, triggering a targeted reassessment without waiting for the next procurement cycle.
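The scope-drift trigger can be sketched as a comparison between the scopes recorded at assessment time and the scopes currently observed. The scope strings and the "broad" markers below are hypothetical; real identity providers use their own scope names, and the inventory itself would come from whatever discovery tooling you run.

```python
# Assumed substrings that mark a scope as broad; tune these to your identity provider.
BROAD_SCOPE_MARKERS = ("directory", "admin")


def new_broad_scopes(baseline: set[str], observed: set[str]) -> set[str]:
    """Return scopes gained since the baseline that look broad."""
    gained = observed - baseline
    return {s for s in gained if any(m in s.lower() for m in BROAD_SCOPE_MARKERS)}


def needs_reassessment(tier: int, baseline: set[str], observed: set[str]) -> bool:
    """Flag lower-tier apps (below Tier 3) that picked up broad access."""
    return tier < 3 and bool(new_broad_scopes(baseline, observed))


# Hypothetical scope names for a Tier 1 scheduling app.
baseline = {"calendar.read"}
observed = {"calendar.read", "directory.read.all"}

if needs_reassessment(1, baseline, observed):
    print("Reassess:", sorted(new_broad_scopes(baseline, observed)))
```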

Ask questions that reveal architecture

Generic prompts like “Do you encrypt data at rest?” produce useless yes answers. Prefer scenario-based items: describe how tenant isolation works in multi-tenant clusters, how keys are rotated, and how customer admins can revoke compromised sessions globally. Ask how the vendor segments production from support access and what logging you can export to your SIEM. These answers expose whether the vendor has thought through operational reality, not just marketing claims.

- Data flows — Require diagrams that show ingress, storage regions, backup targets, and analytics pipelines. Shadow copies in unexpected regions are a common finding.

- AI and ML — If features send customer content to models, clarify training opt-out, retention windows, and human review policies.

- Exit readiness — Export formats, API rate limits, and deletion SLAs matter as much as onboarding speed.

Operationalizing the rhythm

Embed vendor review into a quarterly governance forum with procurement, legal, and business leadership. Present a dashboard of in-flight assessments, overdue renewals lacking refreshed attestations, and vendors that exceeded materiality thresholds for spend or usage growth. Executives should see risk and velocity together—otherwise security is cast as the department of “no.” Celebrate teams that engage early; measure cycle time reduction when tiering works.

Cross-border and industry-specific overlays

Questionnaire templates written for a U.S. headquarters often miss obligations that appear when European employees process health data or when APAC subsidiaries store payroll in regional tenants. Maintain addenda for major jurisdictions: Schrems-era transfer mechanisms, India’s digital personal data protection expectations, and sector rules for finance or healthcare. Vendors appreciate clarity about which annex applies; buyers avoid last-minute legal escalations that freeze signatures.

Industry regulators increasingly ask how third-party SaaS failures would propagate into your control environment. Map critical SaaS dependencies in business impact analyses the same way you map data centers. When questionnaires surface single points of failure—such as a lone identity bridge or a batch exporter with excessive scope—capture remediation in the same ticket system you use for internal vulnerabilities so accountability persists after procurement closes.

Measuring program quality

Volume metrics like “questionnaires completed” mislead. Prefer outcome metrics: percentage of spend covered by assessments refreshed within policy windows, count of high-tier vendors without compensating controls documented, and time from intake to evidence-based decision. Pair quantitative dashboards with qualitative sampling—periodically read full files for a random vendor to ensure analysts are not rubber-stamping recycled answers without critical reading.
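Two of these outcome metrics can be computed from a simple assessment register. The sketch below assumes a 365-day policy window and an invented record layout; both are placeholders for whatever your policy and tooling actually define.

```python
from dataclasses import dataclass
from datetime import date

POLICY_WINDOW_DAYS = 365  # assumed refresh window; set this from your own policy


@dataclass
class Assessment:
    # Illustrative record layout, not a standard schema.
    vendor: str
    tier: int
    annual_spend: float
    last_refreshed: date
    compensating_controls_documented: bool


def spend_coverage_pct(assessments: list[Assessment], today: date) -> float:
    """Percentage of spend whose assessment was refreshed within the policy window."""
    total = sum(a.annual_spend for a in assessments)
    if total == 0:
        return 0.0
    fresh = sum(
        a.annual_spend
        for a in assessments
        if (today - a.last_refreshed).days <= POLICY_WINDOW_DAYS
    )
    return 100.0 * fresh / total


def high_tier_without_controls(assessments: list[Assessment]) -> int:
    """Count Tier 3 vendors with no compensating controls on file."""
    return sum(
        1 for a in assessments
        if a.tier == 3 and not a.compensating_controls_documented
    )


# Hypothetical register entries.
register = [
    Assessment("support-suite", 3, 75000.0, date(2026, 1, 10), True),
    Assessment("payroll-exporter", 3, 25000.0, date(2024, 11, 1), False),
]
today = date(2026, 4, 12)

print(spend_coverage_pct(register, today))   # 75.0
print(high_tier_without_controls(register))  # 1
```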

Train new reviewers with paired audits: a senior analyst marks up a junior’s notes, focusing on whether follow-up questions would have changed risk ratings. That investment pays off when teams scale across regions without diluting standards. Finally, publish a lightweight RACI so business sponsors know when they must attend architecture reviews versus when security can operate asynchronously. Ambiguity slows every program; clarity compounds velocity.

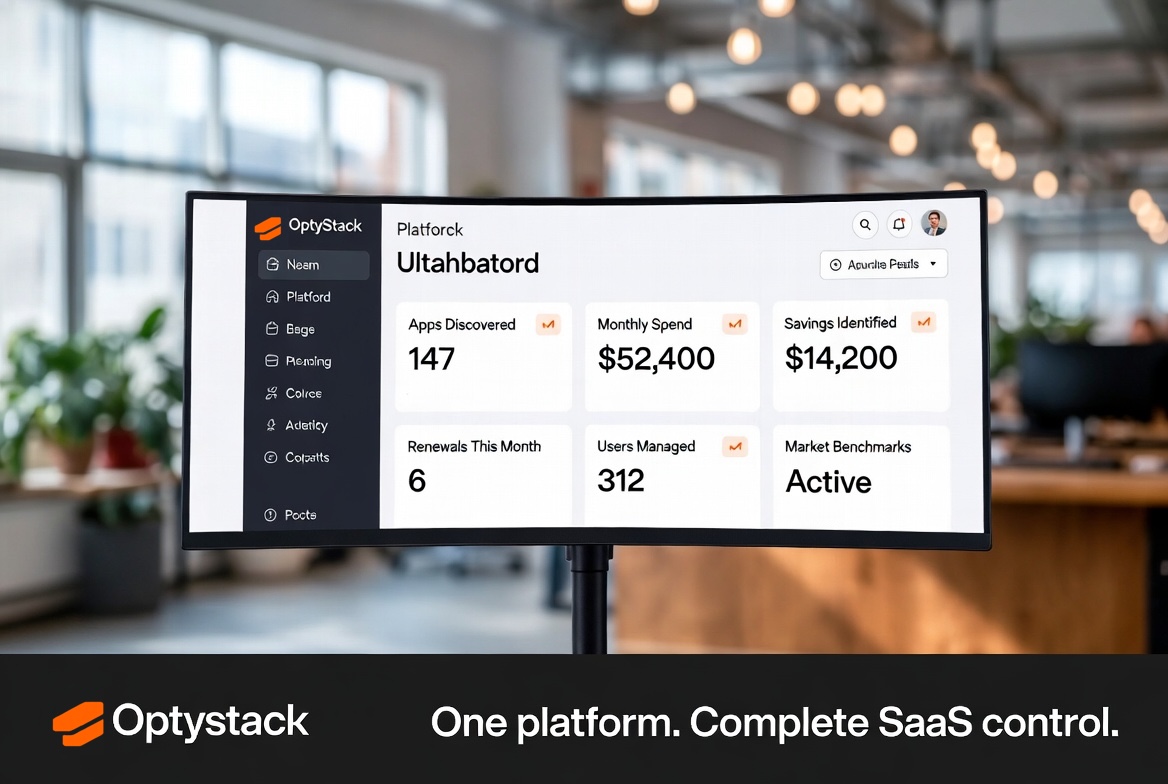

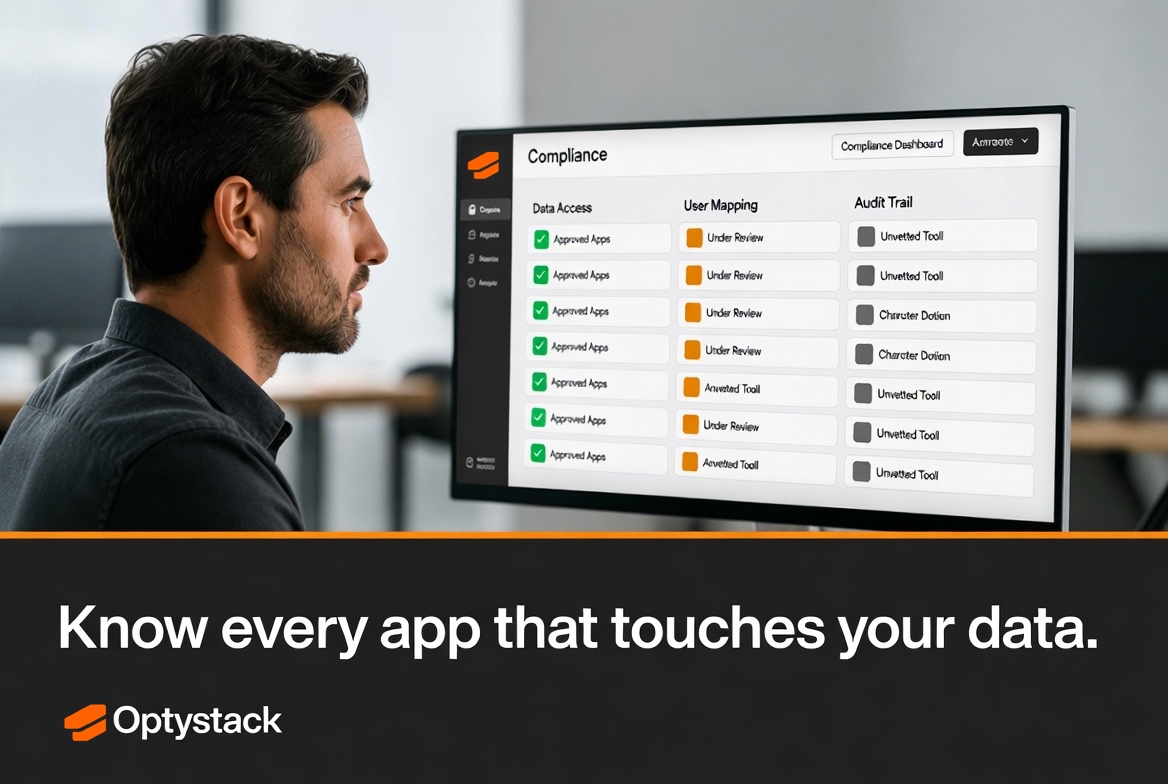

OptyStack helps organizations see which SaaS applications are actually in use, who sponsored them, and how entitlements evolved over time—context that turns questionnaires from abstract paperwork into targeted conversations. When due diligence connects to live signals, you spend fewer hours on theater and more on the vendors that truly shape your security posture.

Maturity indicators

Immature programs measure success by questionnaires completed. Mature programs measure residual risk: count of Tier 3 vendors without current testing, percentage of spend covered by refreshed attestations, and mean time to remediate a failed control. Tie those metrics to executive dashboards so funding for automation and headcount follows demonstrated gaps, not anecdotal urgency. Over time, the objective is not more forms; it is faster, evidence-based trust in the SaaS portfolio your employees already depend on.

Invest in vendor relationships, not just audits. Security champions on the vendor side will warn you about roadmap changes that affect data handling; purely transactional questionnaires rarely build that channel. A balanced program combines structured assessment with human partnership, especially for strategic platforms that will anchor your stack for years.

Red teaming the questionnaire itself

Periodically ask whether your template still pulls the right evidence or merely repeats last decade’s concerns. Red team it with engineers who understand modern attack paths—supply-chain compromises, OAuth consent phishing, and model-poisoning risks for AI features. Update sections when regulators publish new guidance; stale templates signal to vendors that your process is ceremonial. Keep version history so you can explain to auditors why a 2026 question replaced a 2022 one.

Balance depth with speed: extremely long forms encourage vendors to outsource answers to junior staff who lack context. Shorter, scenario-driven questionnaires answered by accountable executives often yield higher signal. The objective is defensible assurance, not maximal paperwork.

Closing the loop with procurement and legal

Security findings should feed standardized contract clauses: subprocessor notification timelines, encryption standards, subprocessor change approvals, and incident cooperation. When questionnaires identify gaps, translate them into enforceable language instead of informal “we will fix it” emails. Legal appreciates concrete exhibits; vendors appreciate knowing non-negotiables before commercial terms finalize.