A Practical Governance Framework for Generative AI Procurement

OptyStack Team · April 4, 2026

Employees will adopt AI assistants with or without a policy. This framework helps legal, security, and procurement evaluate vendors, data handling, and pricing models before enterprise-wide rollout.

Generative AI arrived through side doors: browser tabs, free tiers, and shadow API keys. Enterprise procurement was built for multi-year ERP deals, not monthly token bills that scale with usage. A workable governance framework accepts that experimentation is inevitable while steering the organization toward vendors that meet legal, security, and resilience bars.

Define non-negotiables first

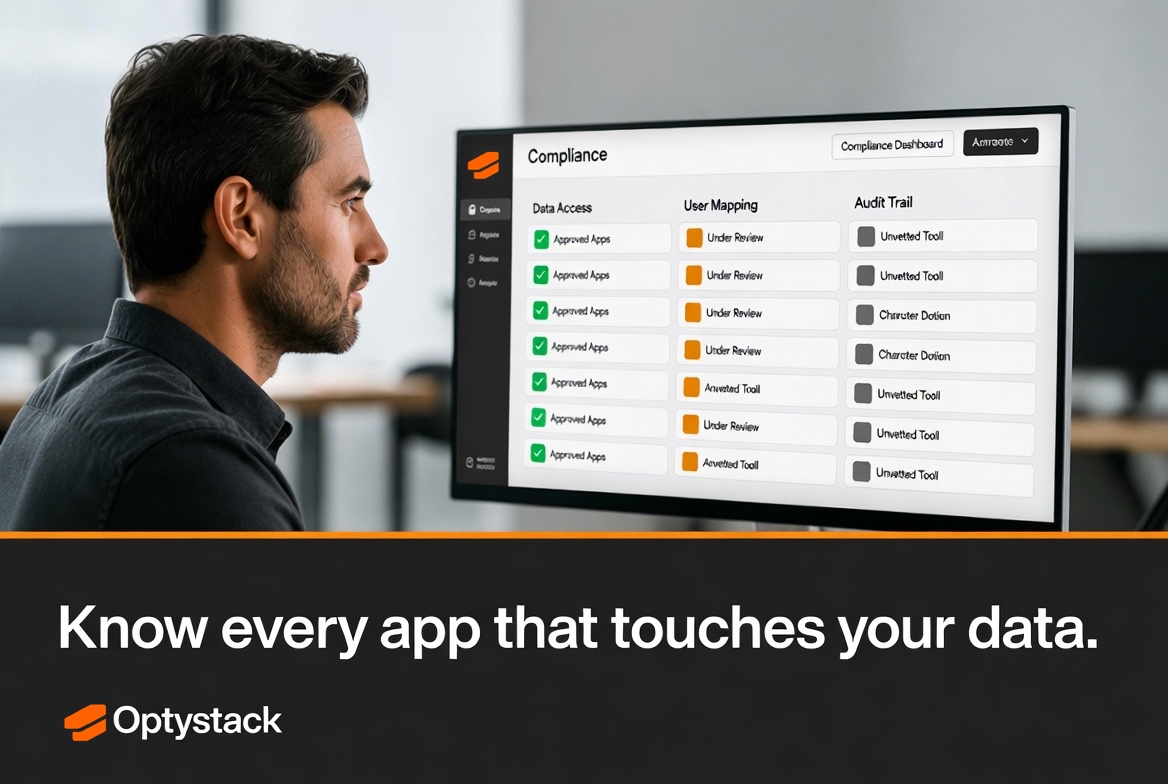

Before comparing vendors, agree on constraints: which data classes, if any, a vendor may use for model training; where inference and storage take place; which subprocessors are involved; audit rights; and incident notification SLAs. Security should publish a short “approved patterns” memo (for example, enterprise keys only, and no pasting regulated data into consumer chatbots) so employees know the guardrails.
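One way to keep these constraints from drifting is to write them down as data and check each vendor against them. The sketch below is illustrative only: the `VendorTerms` fields, the allowed regions, and the 72-hour notice limit are assumptions made for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorTerms:
    """Terms a vendor offers. Field names are hypothetical."""
    name: str
    trains_on_customer_data: bool
    inference_regions: frozenset[str]
    discloses_subprocessors: bool
    grants_audit_rights: bool
    incident_notice_hours: int


# Assumed red lines for illustration; replace with what your teams agree on.
ALLOWED_REGIONS = frozenset({"eu", "us"})
MAX_INCIDENT_NOTICE_HOURS = 72


def violations(v: VendorTerms) -> list[str]:
    """Return the non-negotiables this vendor fails, in a fixed order."""
    problems = []
    if v.trains_on_customer_data:
        problems.append("trains on customer data")
    if not v.inference_regions <= ALLOWED_REGIONS:  # subset check
        problems.append("inference outside allowed regions")
    if not v.discloses_subprocessors:
        problems.append("subprocessors not disclosed")
    if not v.grants_audit_rights:
        problems.append("no audit rights")
    if v.incident_notice_hours > MAX_INCIDENT_NOTICE_HOURS:
        problems.append("incident notice too slow")
    return problems
```

An empty list means the vendor clears every red line. Anything else gives legal and security a concrete starting point for negotiation.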

Procurement then maps those constraints to contract terms: usage-based pricing caps, commitment refunds, and clarity on whether the vendor may train on customer prompts. Legal reviews shrink when RFPs already embed your red lines.
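To see what a usage-based pricing cap means in practice, it helps to run the arithmetic. This is a minimal sketch assuming a flat price per million tokens and a single monthly cap. Real contracts often add tiers, commitments, or overage rates that this ignores, and the numbers are made up.

```python
def monthly_charge(tokens_used: int, price_per_million: float, cap: float) -> float:
    """Usage-based charge for one month, limited by a contractual cap."""
    uncapped = tokens_used / 1_000_000 * price_per_million
    return min(uncapped, cap)


# Hypothetical figures: 5.00 per million tokens, capped at 8,000 per month.
quiet_month = monthly_charge(400_000_000, 5.0, 8_000.0)    # below the cap
busy_month = monthly_charge(2_000_000_000, 5.0, 8_000.0)   # cap applies
```

Without the cap, the busy month would cost 10,000. With it, the charge stops at 8,000, which is the exposure finance can plan around.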

Trial and land patterns

Employee-led pilots are valuable signals of demand. Capture them in a lightweight intake: business sponsor, intended use case, estimated monthly usage, and exit criteria. When a trial graduates to paid, finance should see the same SKU in the procurement catalog—not a surprise invoice from a new entity name on the card feed.
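The intake described above can be as small as a four-field record with a completeness check. The sketch below assumes usage is estimated in tokens per month. The class and field names are hypothetical, so adapt them to whatever form or ticketing tool you already use.

```python
from dataclasses import dataclass


@dataclass
class PilotIntake:
    """Lightweight intake record for an employee-led AI pilot."""
    business_sponsor: str
    use_case: str
    est_monthly_tokens: int      # assumed unit; seats or spend would also work
    exit_criteria: list[str]

    def missing_fields(self) -> list[str]:
        """Name the fields still empty, so reviewers can bounce incomplete requests."""
        missing = []
        if not self.business_sponsor.strip():
            missing.append("business_sponsor")
        if not self.use_case.strip():
            missing.append("use_case")
        if self.est_monthly_tokens <= 0:
            missing.append("est_monthly_tokens")
        if not self.exit_criteria:
            missing.append("exit_criteria")
        return missing
```

A request with no sponsor or no exit criteria is a sign that the pilot is not ready to graduate to a paid contract.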

- Centralize API keys: Rotate out keys tied to individual employees and replace them with service principals that have scoped permissions.

- Monitor adoption: Track active users and token burn against business outcomes to avoid shelfware at LLM prices.
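The monitoring bullet comes down to two ratios: how many paid seats are actually used, and how many tokens each business outcome costs. Here is a rough sketch. The `outcomes` argument is a stand-in for whatever your team counts (tickets resolved, pull requests merged), and the 50% shelfware threshold is an arbitrary assumption.

```python
from typing import Optional

SHELFWARE_THRESHOLD = 0.5  # assumed cutoff: under half of seats active


def adoption_summary(licensed_seats: int, active_users: int,
                     tokens_used: int, outcomes: int) -> dict:
    """Summarize seat utilization and token burn per business outcome."""
    utilization = active_users / licensed_seats if licensed_seats else 0.0
    tokens_per_outcome: Optional[float] = (
        tokens_used / outcomes if outcomes else None  # None: nothing to divide by
    )
    return {
        "seat_utilization": utilization,
        "tokens_per_outcome": tokens_per_outcome,
        "likely_shelfware": utilization < SHELFWARE_THRESHOLD,
    }
```

Neither number means much on its own. The trend across months is what tells you whether usage is paying for itself.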

Continuous review

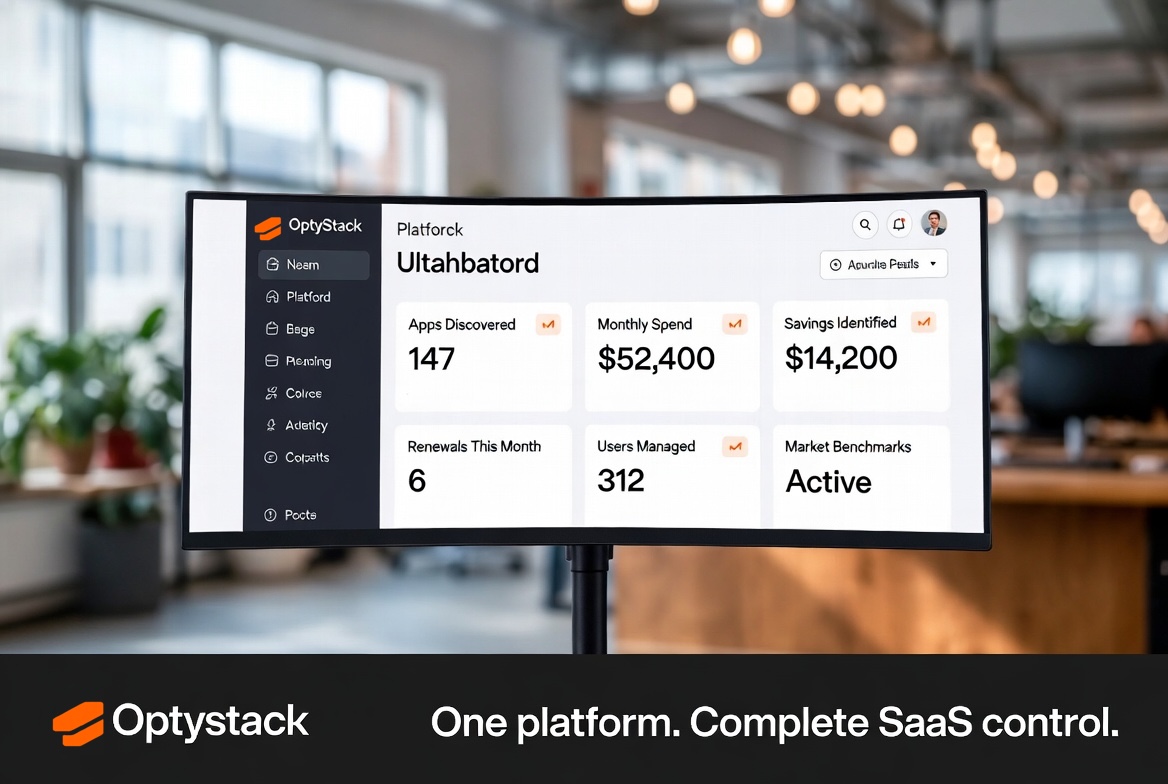

Model capabilities change quickly, so governance must be a loop, not a one-time policy PDF. Each quarter, reassess vendors against updated threat models and regulator guidance. OptyStack helps organizations discover shadow AI usage and align it with sanctioned paths, so innovation accelerates inside boundaries everyone understands.

Vendor and model diversity

Enterprises rarely standardize on a single LLM provider; teams experiment with specialized models for code, summarization, or customer support. Your framework should allow multiple vendors under consistent data rules rather than forcing a monoculture that drives shadow usage. Publish a short list of approved model families with their required configuration (enterprise tenancy, logging, and regions) so engineering does not have to escalate every experiment.
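If the approved list is published as data, teams can check for themselves whether an experiment needs escalation. This sketch uses placeholder family names and regions. It assumes that enterprise tenancy and logging are required for every family and that only the permitted regions vary.

```python
# Hypothetical approved list: family name -> regions where it may run.
APPROVED_FAMILIES = {
    "family-a": {"eu", "us"},
    "family-b": {"eu"},
}


def needs_escalation(family: str, enterprise_tenancy: bool,
                     logging_enabled: bool, region: str) -> bool:
    """True when a proposed setup falls outside the published patterns."""
    allowed_regions = APPROVED_FAMILIES.get(family)
    if allowed_regions is None:
        return True  # unlisted family: always goes to review
    compliant = enterprise_tenancy and logging_enabled and region in allowed_regions
    return not compliant
```

Under these assumptions, only setups that return `True` go to security for review, and everything else can proceed.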

Finance should see token or seat economics in categories that match budget planning: engineering R&D versus customer support versus internal G&A. When costs spike, leaders can ask whether usage matched business outcomes or reflected unfettered experimentation—without shutting down innovation by default.
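Rolling invoices up into budget categories and comparing months is enough to surface the spikes worth a conversation. The following is a sketch under assumed inputs: line items arrive as `(category, amount)` pairs, the category labels are invented, and a spike is defined arbitrarily as more than 1.5 times the previous month.

```python
from collections import defaultdict


def spend_by_category(line_items: list[tuple[str, float]]) -> dict[str, float]:
    """Sum invoice line items into budget categories."""
    totals: dict[str, float] = defaultdict(float)
    for category, amount in line_items:
        totals[category] += amount
    return dict(totals)


def spikes(current: dict[str, float], previous: dict[str, float],
           threshold: float = 1.5) -> list[str]:
    """Categories whose spend grew past threshold x last month.

    Categories with no prior spend are skipped: there is no baseline to compare.
    """
    return sorted(
        category for category, amount in current.items()
        if previous.get(category, 0) > 0 and amount > threshold * previous[category]
    )
```

A flagged category is a prompt to ask whether usage matched outcomes, not an automatic reason to cut access.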